Intel recently pushed the boundaries of its Artificial Intelligence (AI) program. The global giant unveiled several new products to accelerate AI system design, development, and deployment from the cloud to edge devices. It started by showcasing the much-talked-about Intel® Nervana Neural Network Processors (NNP) for training and inference. The NNP-T1000 chip handles training tasks, while the NNP-I1000 chip caters to inference requirements.

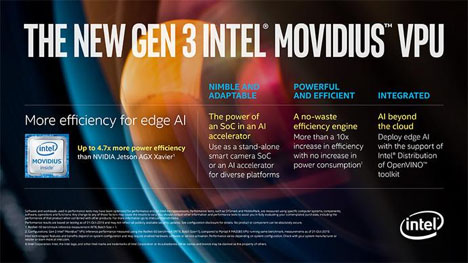

These NNPs are Intel’s first purpose-built Application-Specific Integrated Circuits (ASICs) for complex deep learning tasks, designed to deliver high efficiency and scale for cloud and data centre customers. Intel also revealed the next-generation Intel® Movidius™ VPU (Vision Processing Unit) for edge media, computer vision, and inference applications.

Significance

These newly released products expand Intel’s portfolio of AI solutions. The firm expects AI-driven revenue worth $3.5 billion this year. Intel’s AI portfolio is meant to help customers develop and deploy AI models at every scale, from massive clouds to tiny edge devices and everything in between.

Advances in deep learning reasoning and context demand increasingly complex data, models, and techniques. They also create the need for different architectures. Because much of the world runs its AI workloads on Intel® Xeon® Scalable processors, Intel keeps extending the platform with additions such as Intel® Deep Learning Boost with VNNI (Vector Neural Network Instructions), which improves AI inference performance across edge and data centre deployments. Intel expects the platform to serve as a robust AI base for years to come. Some of the most advanced deep learning training workloads need performance to double every 3.5 months. According to Intel, further progress in this direction is possible only with a portfolio as broad as its own.
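To put the 3.5-month doubling figure in perspective, the short sketch below converts a doubling period into an equivalent yearly growth factor. It is only back-of-the-envelope arithmetic: the function name `annual_growth_factor` is made up for this illustration, and the calculation assumes smooth exponential growth, which the article does not state.

```python
def annual_growth_factor(doubling_months: float) -> float:
    """Return the growth factor over 12 months for a given doubling period.

    Assumes smooth exponential growth (an assumption made for this
    illustration, not a claim from Intel).
    """
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    # Number of doublings that fit into one year, applied as a power of two.
    return 2.0 ** (12.0 / doubling_months)


if __name__ == "__main__":
    factor = annual_growth_factor(3.5)
    print(f"Doubling every 3.5 months is roughly {factor:.1f}x per year")
```

Under that assumption, doubling every 3.5 months works out to a little under an eleven-fold increase in required performance per year.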

In the Maker’s Words

Naveen Rao, vice president and general manager of Intel’s Artificial Intelligence Products Group, states, “With this next phase of AI, we’re reaching a breaking point in terms of computational hardware and memory. Purpose-built hardware like Intel Nervana NNPs and Movidius VPUs are necessary to continue the incredible progress in AI. Using more advanced forms of system-level AI will help us move from the conversion of data into information toward the transformation of information into knowledge.”

