Cache is a term that is commonly heard today. With faster processors appearing all the time, the term is used more and more. Cache is one of the most important factors in faster processing, but what actually is cache? Is it a processing unit or a memory? What are L1, L2 and L3 cache? To understand this, we first need some insight into how the CPU works and how it processes data. So let us take a look.

MEMORY ORGANIZATION

The CPU (Central Processing Unit) is like the brain of a computer; it performs the arithmetic and logical operations of the system by carrying out the instructions in the code.

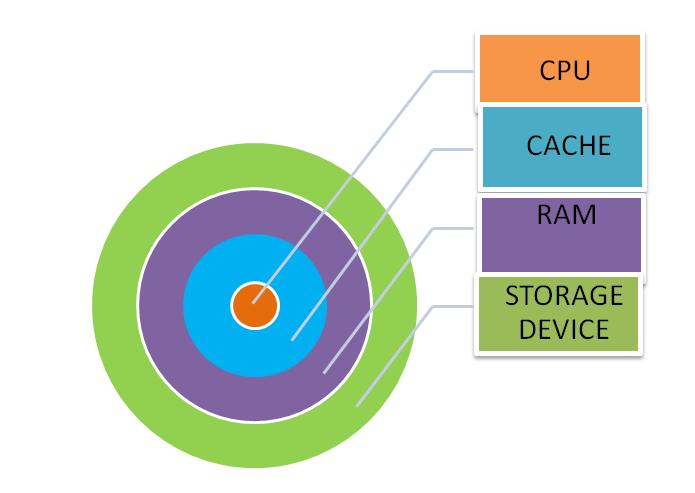

The memory organization of a system is shown below:

At the core is the CPU, followed by the cache, then RAM, and finally the storage device.

But how do these work?

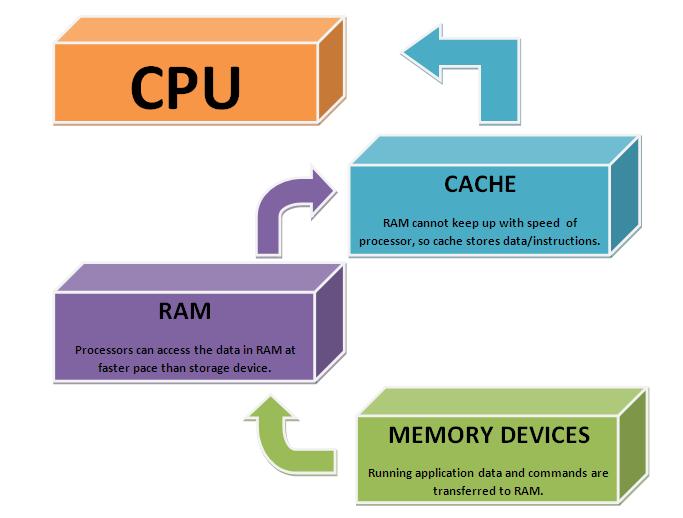

When an application starts, or when data is to be read or written or any other operation is to be performed, the data and commands associated with that operation are moved from a slow storage device (a magnetic device such as a hard disk, an optical device such as a CD drive, etc.) to a faster device. This faster device is RAM (Random Access Memory), which is a type of DRAM (Dynamic Random Access Memory). RAM is placed here because it is faster: whenever the processor needs data, commands or instructions, RAM provides them at a higher rate than the slow storage devices can. In effect, RAM serves as a cache memory for the storage devices.

Although RAM is much faster than a storage device, the processor works at a much faster pace still, and RAM cannot supply the needed data and instructions at that rate. So there is a need for a device that is faster than RAM and can keep up with the processor. The required data is therefore passed on to the next level of fast memory, which is known as CACHE memory.

CACHE is also a type of RAM, but it is Static RAM (SRAM). SRAM is faster and costlier than DRAM because each bit is stored in a flip-flop (typically 6 transistors), whereas DRAM uses 1 transistor and a capacitor to store each bit in the form of charge. Moreover, because of its bistable latching circuitry, SRAM does not need to be refreshed periodically as DRAM does, which makes it faster.
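To get a feel for the speed gap described above, the sketch below converts an access time for each kind of memory into the number of processor cycles that pass while waiting. The latency figures and the 3 GHz clock are illustrative order-of-magnitude assumptions, not measurements from any particular system, and the names `ASSUMED_LATENCY_NS` and `cycles_waited` are made up for this example.

```python
# Illustrative, order-of-magnitude access times in nanoseconds.
# These are assumptions for the sake of the example, not measured values.
ASSUMED_LATENCY_NS = {
    "SRAM cache": 1,
    "DRAM (RAM)": 100,
    "hard disk": 10_000_000,  # roughly 10 milliseconds
}

# Assumed processor clock: 3 GHz means 3 cycles per nanosecond.
ASSUMED_CLOCK_GHZ = 3


def cycles_waited(latency_ns, clock_ghz=ASSUMED_CLOCK_GHZ):
    """Return how many processor cycles elapse during one access."""
    return latency_ns * clock_ghz


for tier, latency in ASSUMED_LATENCY_NS.items():
    print(f"{tier}: about {cycles_waited(latency):,} cycles per access")
```

Whatever the exact numbers on a real machine, the ordering is the point: each step away from the processor costs many more idle cycles, which is why a small, fast memory close to the processor pays off.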

Part II

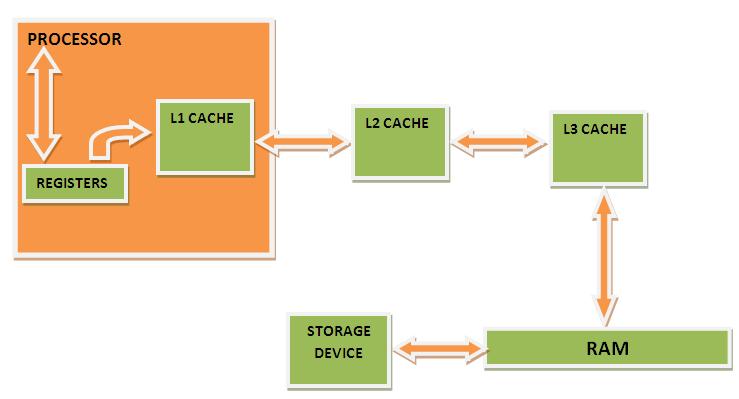

This cache memory is generally divided into different levels. The figure shows the process flow:

Let us suppose that the system has three levels of cache (levels here means that the overall cache memory is split into separate hardware segments that differ in speed and capacity). From RAM, data is transferred into the third-level cache (L3 cache). L3 cache is one segment of the overall cache memory; it is faster than RAM but slower than L2 cache. To speed things up further, a second-level cache (L2 cache) is used. It is located in the immediate vicinity of the processor, and in some modern processors the L2 cache is built in, which makes the process faster still. Note that a system need not have three levels of cache; it might have only one or two. At the core is the first-level cache, the L1 cache memory. The most commonly used commands, instructions and data are stored in this section of memory. It is built into the processor itself, and so it is the fastest of all the cache memories.

PROCESS FLOW

Whenever the processor needs to perform an action or execute a command, it first checks the data registers. If the required instruction or data is not present there, it looks in the first level of cache memory (L1); if the data is not there either, it goes on to the second and then the third level of cache memory. When the data needed by the processor is not found in the cache, this is known as a CACHE MISS; it delays execution and so slows the system down. When the data is found in cache memory, this is known as a CACHE HIT.

If the data needed is not found in any level of cache memory, the processor checks RAM. If this also fails, it goes to the slower storage device.
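The search order described above can be sketched in a few lines of Python. This is a simplified model, not how real hardware is built: the `Level` class and `lookup` function are hypothetical names, the register check is left out, capacity limits and eviction are ignored, and copying found data into every faster level is an assumption made to keep the example short.

```python
class Level:
    """One level of the hierarchy: a name plus the set of addresses it holds."""

    def __init__(self, name, contents=()):
        self.name = name
        self.contents = set(contents)


def lookup(address, caches, ram, storage):
    """Search L1, then L2, then L3, then RAM, then the storage device.

    Returns a pair (where_found, is_cache_hit).
    """
    for i, level in enumerate(caches):
        if address in level.contents:
            # Cache hit: also copy the data into the faster levels above.
            for faster in caches[:i]:
                faster.contents.add(address)
            return level.name, True

    # Cache miss: fall back to RAM, then to the slow storage device.
    if address in ram.contents:
        source = ram.name
    elif address in storage.contents:
        ram.contents.add(address)  # storage -> RAM first
        source = storage.name
    else:
        raise KeyError(f"address {address!r} not found anywhere")

    # Bring the data into the cache so the next request is a hit.
    for level in caches:
        level.contents.add(address)
    return source, False


caches = [Level("L1", {"a"}), Level("L2", {"b"}), Level("L3", {"c"})]
ram = Level("RAM", {"d"})
storage = Level("storage", {"e"})

for address in ["a", "c", "d", "e", "e"]:
    where, hit = lookup(address, caches, ram, storage)
    print(address, "found in", where, "(cache hit)" if hit else "(cache miss)")
```

In this run the first request for `e` is a cache miss that goes all the way to the storage device, while the second request for `e` is a cache hit in L1, because the first request brought the data up through the hierarchy.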

So the above process can be graphically summarized as:

Part III

CACHE CONTROLLER (Best part of all)

So we can say that cache makes the system fast by speeding up processing and execution. But that is not the whole story. The question arises: how does the cache know which pieces of data or commands/instructions are important and likely to be requested by the processor in the near future? The answer is the cache controller associated with the cache. It applies the following 2 principles to the cache:

· First case: Cache is used to hold the instructions/data that the computer uses very frequently.

· Second case: Cache is used to hold likely data, that is, data which will most probably be read in the near future.

Let us understand these by example.

Take the first case. Consider an example. You are studying a subject and solving numerical problems related to a topic.

· To solve the 1st problem you need a formula, so you open chapter 1 of the book, look up the formula and then solve the problem.

· Now you move on to the next problem. To solve the 2nd problem you again need to open chapter 1 and look up the formula.

· Suppose this goes on…

A better way is to write the formula on a piece of paper and pin it to the desk. This saves time and speeds up the process. This is how the cache controller works: it keeps the data most likely to be needed in the cache, so that it is already there when the processor asks for it.
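The formula-on-the-desk idea can be sketched as a tiny cache in Python. The article does not say which rule a cache controller uses to decide what to keep; the sketch below assumes a least-recently-used (LRU) rule, which is one common choice, and `SmallCache` and `open_book` are hypothetical names invented for this illustration.

```python
from collections import OrderedDict


class SmallCache:
    """A tiny cache that keeps the most recently used items (LRU eviction)."""

    def __init__(self, capacity, slow_fetch):
        self.capacity = capacity
        self.slow_fetch = slow_fetch  # function standing in for the slow memory
        self.items = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self.items:
            self.hits += 1
            self.items.move_to_end(key)  # mark as most recently used
            return self.items[key]
        self.misses += 1
        value = self.slow_fetch(key)
        self.items[key] = value
        if len(self.items) > self.capacity:
            self.items.popitem(last=False)  # evict the least recently used item
        return value


def open_book(chapter):
    """Hypothetical slow lookup: opening the book to find the formula."""
    return f"formula from chapter {chapter}"


desk = SmallCache(capacity=2, slow_fetch=open_book)
for _ in range(5):  # five problems that all need the chapter 1 formula
    desk.get(1)
print("hits:", desk.hits, "misses:", desk.misses)
```

Only the first problem requires opening the book; the remaining four find the formula already pinned to the desk, which is the cache hit case.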

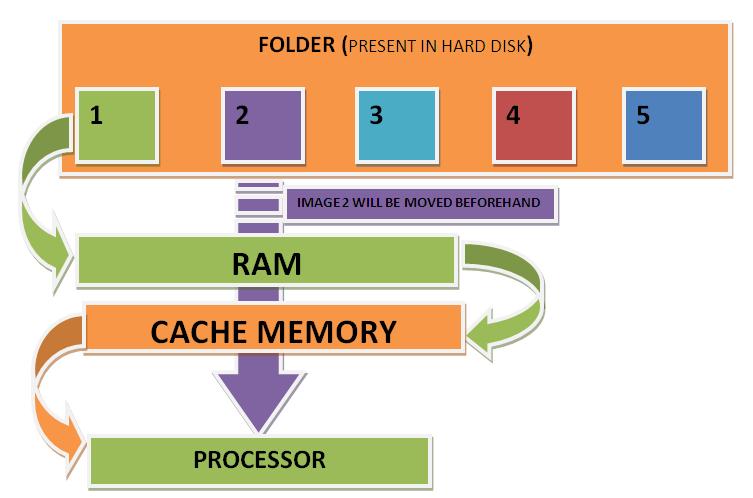

Let us consider the second case. This is just an illustration to understand the idea. Let us say that a folder has 5 images named 1, 2, 3, 4 and 5, and the user opens image 1.

The controller will now judge it probable that the user will open image 2 next, and will move image 2 into the cache beforehand. When image 2 is opened, the result is a cache hit and execution is fast.
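The image example can be sketched as a cache that, on every access, also loads the next item in sequence. This is only a toy model of the idea under simple assumptions: it always guesses "the next number" and never evicts anything, and `PrefetchingCache` and `load_image` are hypothetical names, not part of any real system.

```python
class PrefetchingCache:
    """Cache that, on each access, also loads the next item in sequence."""

    def __init__(self, load, last_number):
        self.load = load                # function standing in for the slow read
        self.last_number = last_number  # highest image number in the folder
        self.items = {}

    def open(self, number):
        """Return (image, was_cache_hit) and prefetch the following image."""
        hit = number in self.items
        if not hit:
            self.items[number] = self.load(number)
        # Prefetch: assume the user will open the next image soon.
        nxt = number + 1
        if nxt <= self.last_number and nxt not in self.items:
            self.items[nxt] = self.load(nxt)
        return self.items[number], hit


def load_image(number):
    """Hypothetical slow read of an image file from storage."""
    return f"image {number}"


cache = PrefetchingCache(load_image, last_number=5)
for n in [1, 2, 3]:
    _, hit = cache.open(n)
    print(f"image {n}:", "cache hit" if hit else "cache miss")
```

Opening image 1 is a miss, but it causes image 2 to be loaded in advance, so opening images 2 and 3 afterwards are both hits.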

CONCLUSION

So we can say that cache memory is a high-speed random access memory that a system's central processing unit uses to store data and instructions temporarily. It decreases execution time by storing the most frequently used and most probably needed data and instructions “closer” to the processor, where the system's CPU can get them quickly.
