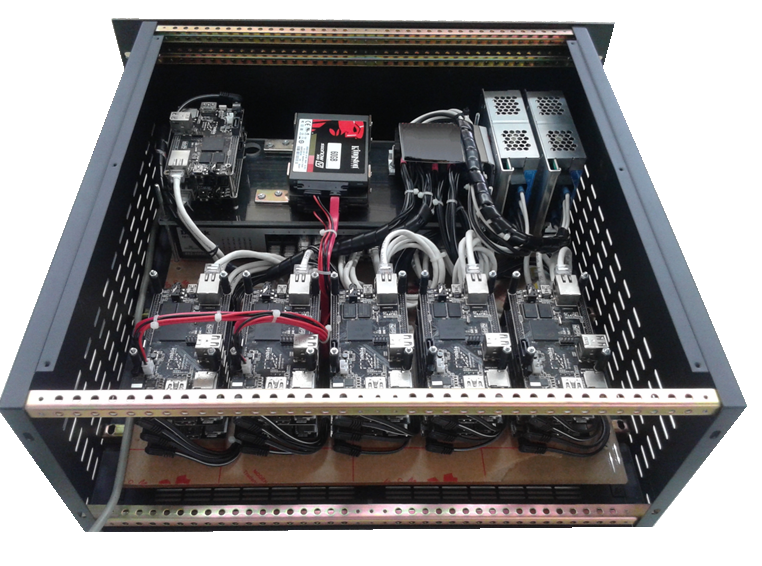

Figure 1: Spark Hadoop ARM Cluster

Heng Yan posted this experimental project where he has prototyped an ARM-based cluster, designed to process Big Data. It’s a 22-node Cubieboard A10 with 100 Mbps Ethernet.

The designing of an ARM chip does not allow processing of Big Data but it is gradually becoming powerful enough to do so. Several attempts have been made to run Apache Hadoop on top of an ARM cluster and many more experiments are taking place successfully. But still the question arises that whether it is feasible to do Big Data on a low-cost ARM cluster?

The doubt arises because of the disk with slow I/O and networking of ARM SoCs, Hadoop’s MapReduce that will really not be able to process a real Big Data computation, which average 15GB per file.

Figure 2: Cluster running of Spark and Hadoop

The cluster running of Spark and Hadoop may solve the answer. As Hadoop’s Map Reduce is not a good choice to process on this kind of cluster, so only HDFS is used and an alternative was tried to found to stumble upon Apache Spark. It is important to know that Spark is an in-memory framework which optionally spills intermediate results out to a disk when a computing node is running out-of-memory and luckily it runs fine on the cluster.

The cluster has total 20GB of RAM and only 10GB is available for data processing. In case, larger amount of memory when tried to allocated, some nodes will die during the computation.

But the cluster here is good enough to crush a single, 34GB, Wikipedia article file from the year 2012. This was possible after a tweaked word count program was ran in Spark’s shell and waited for few minutes and finally the cluster answered the word count.

The designing consisted of 20 Spark worker nodes, and 2 of them running Hadoop Data Nodes that allowed us to understand that the data locality of Spark/Hadoop cluster. The Hadoop’s Name Node and the Spark’s master node were ran on the same machine, whereas another machine was the driver.

This could be said as success as an ARM system-on-chip board has demonstrated an enough power to form a cluster and process non-trivial size of data. Still, there are missing puzzle-pieces to be found and the need to choose the right software package. Attempts are being made to develop a new one, bigger by CPU cores with small size.

The demonstration of this project is available on the following website-

Filed Under: Reviews

Questions related to this article?

👉Ask and discuss on Electro-Tech-Online.com and EDAboard.com forums.

Tell Us What You Think!!

You must be logged in to post a comment.