Humans live in an analog world which they study and understand as a digital world.

Humans collect information about nature and organize it as ‘science’. The very foundation of science, i.e. organized knowledge of nature and natural phenomena, rests on language and mathematics. Every branch of science involves identifying entities, their attributes and associated events, and analyzing those attributes and events mathematically. Such structural analysis of nature begins with quantification: things, events and their properties are named as discrete information (words), and every measurable property is expressed in numbers. This is the basic method by which humans explore the world.

Electronics, too, has no other way to represent, maintain and analyze information. Any information is either discrete, like the names of things, events and properties, or continuous, in which case it must be measured against a standard unit, quantified and represented as a number.

Representing discrete information requires a language. For naming things, events and properties, every language has a set of symbols that are arranged in a fixed order and pronounced and written according to a set of rules, so that each thing, event or property is identified by a unique name or word.

The representation of continuous information requires use of mathematics. For representing continuous information like most of the physical quantities, a unit is decided with which the continuous entity or event is compared and the continuous information is represented as a number followed by the unit.

So, to represent any information in this world, a set of symbols and digits is needed: symbols to form words (names of things, their properties, events and units) and digits to form numbers. Humans do this with language and mathematics; electronics does it with digital electronics.

Digital electronics deals with circuits that work with only two levels of voltage or current. These two levels are called High and Low logic. High logic refers to the presence of the full supply voltage at a point in the circuit, and Low logic refers to the absence of voltage, current or power at that point. Since it is not practically possible to hold exact voltage levels, some tolerance is accepted. Transistor-Transistor Logic (TTL), which is used for designing and fabricating digital integrated circuits, recognizes any voltage between 2 V and 5 V as High at a gate input and between 2.7 V and 5 V as High at a gate output, and any voltage between 0 V and 0.8 V as Low at a gate input and between 0 V and 0.5 V as Low at a gate output. In writing, High logic is represented as 1 and Low logic as 0.

The smallest unit of information in digital electronics is the bit, which can be in either the High or the Low state. A group of four bits is called a nibble and can represent at most 16 (2^4) symbols. A group of eight bits is called a byte and can represent 256 (2^8) symbols. Multiple bytes can be combined to represent a larger number of symbols, digits, or both. These digitally expressed symbols and digits can then represent discrete information (as words) as well as measurable quantities (as numbers).
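The input thresholds above can be sketched as a small classifier. This is a minimal sketch assuming a nominal 5 V supply and only the input levels quoted in the text; the function name `ttl_input_logic` is hypothetical. Voltages between 0.8 V and 2 V are neither a valid Low nor a valid High at a TTL input, so the sketch reports them as undefined (`None`).

```python
from typing import Optional

# TTL input thresholds as quoted in the text (assumed nominal 5 V supply).
V_IL_MAX = 0.8  # highest input voltage still read as Low (volts)
V_IH_MIN = 2.0  # lowest input voltage read as High (volts)
V_CC = 5.0      # supply voltage (volts)


def ttl_input_logic(voltage: float) -> Optional[int]:
    """Return 1 for High, 0 for Low, or None when the voltage is in the undefined band."""
    if not 0.0 <= voltage <= V_CC:
        raise ValueError(f"{voltage} V is outside the 0-5 V range considered here")
    if voltage <= V_IL_MAX:
        return 0
    if voltage >= V_IH_MIN:
        return 1
    return None  # between 0.8 V and 2 V: not a valid logic level


for v in (0.3, 1.4, 3.3):
    print(v, "->", ttl_input_logic(v))
# 0.3 -> 0
# 1.4 -> None
# 3.3 -> 1
```

Output thresholds (2.7 V and 0.5 V) are deliberately not modeled; a gate output is expected to stay inside the tighter band so that the next gate's input reads it reliably.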

Fig. 1: Representational Image of Digital Electronics

So, digital electronics is all about electronic circuits that operate on two voltage levels (HIGH and LOW) and can perform numerical and logical operations on them to process real-world information (represented symbolically as bits and bytes) for mathematical and analytical study. Since digital circuits represent all information in bits, each of which takes one of two voltage states (represented mathematically by the two numbers 0 and 1), digital circuits and systems are binary systems. In digital electronics, and therefore in computer systems, the set of symbols used to represent information is referred to as an encoding scheme or code system.

For convenience, any symbol can be represented as a number, so the set of symbols and digits used to represent information in a code system can be expressed as a set of numbers. Every number in a code system or encoding scheme refers to a unique symbol or digit and can be expressed in binary form, i.e. as bits or bytes.

There are many number systems in use, most commonly decimal, octal and hexadecimal. Numbers in any number system can be converted to the binary system and so represented in binary form, which is the form digital electronic circuits work with. Digital circuits are used in applications ranging from computers, telephony, data processing and radar navigation to medical instruments and consumer products, wherever information must be computed in some way.

So, this series on digital electronics begins with an introduction to number systems and their conversion into binary numbers. Remember: numbers represent the symbols and digits of a code system, and those symbols and digits in turn represent information and operations on that information. So, let’s begin by understanding number systems.

Number Systems –

Numbers are a way to represent a count of things or a continuous quantity compared against a standard unit. There have been different number systems, of which the decimal number system is the most common. A number system is a mathematical notation for numbers using a set of digits (symbols); the digits themselves are special symbols representing numbers. A number system is identified by its base or radix, which equals the number of distinct digits it uses. In any number system, a number is represented by the positional notation of its digits. When the count at a position reaches the base, that position resets to zero and the digit in the next higher position is increased by one (a carry). Let us understand this through different number systems –
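The carry rule can be sketched as a function that adds one to a number stored as a list of digits in any base. The function name `increment` and the digit-list representation (most significant digit first) are illustrative assumptions, not part of any standard library.

```python
def increment(digits: list[int], base: int) -> list[int]:
    """Add one to a number stored as a list of digits (most significant first)."""
    result = digits[:]            # work on a copy
    pos = len(result) - 1         # start at the least significant position
    while pos >= 0:
        result[pos] += 1
        if result[pos] < base:    # position has not reached the base: done
            return result
        result[pos] = 0           # position reached the base: reset it...
        pos -= 1                  # ...and carry one into the next position
    return [1] + result           # carry out of the top position adds a new digit


print(increment([9, 9], 10))      # [1, 0, 0]
print(increment([1, 0, 1], 2))    # [1, 1, 0]
print(increment([7], 8))          # [1, 0]
```

The same loop works for every base; only the point at which a position "overflows" changes.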

1) Decimal Number System – The decimal number system consists of the ten digits 0 to 9, which can be used to represent any numeric value; its base is 10. Each digit of a decimal number occupies a position, and moving from right to left the integer columns carry the weights of units, tens, hundreds, thousands and so on. Mathematically, the weights of the integer positions are

Fig. 2: Positive Powers of 10 in Decimal System

$$\cdots \quad 10^3 \quad 10^2 \quad 10^1 \quad 10^0$$

so each position to the left of the decimal point carries the next higher positive power of 10. Likewise, in the fractional part the weight becomes a more negative power of 10 moving from left to right:

Fig. 3: Negative Powers of 10 in Decimal System

$$10^{-1} \quad 10^{-2} \quad 10^{-3} \quad \cdots$$

The value of any decimal number equals the sum of its digits multiplied by their respective weights. For example, N = 7245 in decimal is equal to

7000 + 200 + 40 + 5

which can also be written as

Fig. 4: Weights of a decimal number

$$7245 = 7 \times 10^3 + 2 \times 10^2 + 4 \times 10^1 + 5 \times 10^0$$

As the example shows, in the decimal number system the leftmost digit is the most significant digit (MSD) and the rightmost digit is the least significant digit (LSD).
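The digit-times-weight decomposition can be sketched in a few lines. The helper name `decimal_terms` is hypothetical; it handles non-negative integers only and pairs each digit with its power-of-ten weight, most significant digit first.

```python
def decimal_terms(number: int) -> list[tuple[int, int]]:
    """Split a non-negative integer into (digit, weight) pairs, MSD first."""
    digits = str(number)
    n = len(digits)
    return [(int(d), 10 ** (n - 1 - i)) for i, d in enumerate(digits)]


terms = decimal_terms(7245)
print(terms)                          # [(7, 1000), (2, 100), (4, 10), (5, 1)]
print(sum(d * w for d, w in terms))   # 7245
```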

The decimal number system has been adopted worldwide for mathematical computation because it is easy to use. With base 10, there are only ten symbols (digits) to remember, and written arithmetic is simple because counting up or down just increments or decrements a position, carrying or borrowing at ten. It is also believed that base 10 became the obvious choice because humans have ten fingers.

However, much of the decimal system’s popularity should be credited to the concept of zero. Zero, as a digit with a meaning of its own, was introduced with the decimal system. It was a revolutionary concept: a symbol to represent void or nothing. Zero made it possible to show that a position in a numeral carries no weight, which greatly eased arithmetic on numbers (particularly multiplication) in a way that was probably unimaginable before.

2) Binary Numbers – The binary number system is simple because it consists of only two digits, 0 and 1. Just as the decimal system, with its ten digits, is a base-ten system, the binary system, with its two digits, is a base-two system. The position of a 0 or 1 in a binary number indicates its “weight” within the number: each successively higher position to the left carries the next higher power of two.

In the binary number system, a number such as 101100101 is written as a string of 1s and 0s, and each position along the string, moving from right to left, has a weight twice that of the previous position. Since each binary digit can only be 1 or 0, the base (radix) is 2, and the position of a digit indicates its weight within the string.

Just as in the decimal number system the weight of each position increases by a factor of 10 moving to the left, in the binary number system it increases by a factor of 2. The first digit has a weight of 1 (2^0), the second a weight of 2 (2^1), the third a weight of 4 (2^2) and the fourth a weight of 8 (2^3).

Fig. 5: Decimal Equivalents in Binary Number System

In digital systems, each binary digit is called a bit, and groups of 4 and 8 bits are called a nibble and a byte respectively. The highest decimal number that can be represented by an n-bit binary number is 2^n - 1 (since counting begins at zero). Thus, with an 8-bit binary number, the maximum decimal number that can be represented is 2^8 - 1 = 255.
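The positional weights and the 2^n - 1 limit can be checked with a short sketch. Both helper names, `binary_weights` and `max_unsigned`, are hypothetical and assume unsigned numbers that start counting at zero.

```python
def binary_weights(n_bits: int) -> list[int]:
    """Positional weights of an n-bit binary number, MSB first."""
    return [2 ** i for i in range(n_bits - 1, -1, -1)]


def max_unsigned(n_bits: int) -> int:
    """Largest value representable with n bits when counting starts at zero."""
    return 2 ** n_bits - 1


print(binary_weights(4))                          # [8, 4, 2, 1]
print(max_unsigned(4))                            # 15 (one nibble)
print(max_unsigned(8))                            # 255 (one byte)
print(sum(binary_weights(8)) == max_unsigned(8))  # True: all ones gives the maximum
```

The last line shows why the limit is 2^n - 1: setting every bit to 1 adds up all the weights, and that sum is one less than the next power of two.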

Binary numbers are important because they represent a number using only 0s and 1s. They therefore represent a number in bits (or bytes), which is the form used by digital electronic circuits.

3) Octal Numbers – The octal number system uses the digits 0, 1, 2, 3, 4, 5, 6 and 7, and its base is eight. Each position in an octal number has a positional weight: the least significant position has a weight of 8^0, and higher positions have weights in ascending powers of eight (8^1, 8^2, 8^3 and so on). The octal equivalent of a decimal number can be obtained by dividing the decimal number by 8 repeatedly until a quotient of 0 is obtained and reading the remainders in reverse order.

Octal numbers are useful in their own way. Because each octal digit corresponds to exactly three binary digits, a binary number can be written compactly in octal; an 8-bit byte, for example, needs at most three octal digits. Octal numbers were widely used to represent binary numbers concisely in some early computers that used 12-bit, 24-bit and 36-bit words. A 12-bit word can be represented by a four-digit octal number, each octal digit standing for three bits of the word. For example, the maximum value of a 12-bit word is 4095 in decimal, which is 7777 in octal. The ‘7-7-7-7’ is a concise representation of the 12-bit word 111-111-111-111, where each octal digit converts directly to three bits.

4) Hexadecimal Numbers – The hexadecimal number system has a base of 16 and uses 16 symbols: 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, A, B, C, D, E and F. The symbols A, B, C, D, E and F represent the decimal values 10, 11, 12, 13, 14 and 15 respectively. Each position in a hexadecimal number has a positional weight: the least significant position has a weight of 16^0, and higher positions have weights in ascending powers of sixteen (16^1, 16^2, 16^3 and so on).

When computers started using 8-bit, 16-bit and 32-bit words, hexadecimal numbers became a way to write their binary contents concisely, since each hexadecimal digit stands for exactly four bits. For example, an 8-bit word can have a maximum value of 255, which is FF in hexadecimal. The ‘F-F’ is a concise representation of the 8-bit word 1111-1111, where each hexadecimal digit converts directly to four bits.

Number System Conversions –

Humans use decimal numbers and computers use binary numbers. So, it is useful to convert decimal numbers into binary numbers, octal numbers (a concise representation of 12-bit, 24-bit and 36-bit binary words) and hexadecimal numbers (a concise representation of 8-bit, 16-bit and 32-bit binary words). Binary, octal and hexadecimal numbers may also need to be converted back to decimal, and octal and hexadecimal numbers may need to be converted to binary and vice versa.

1) Decimal to Binary Conversion – An easy method of converting a decimal integer into a binary number is to divide it by 2 repeatedly until a quotient of zero is obtained. The binary number is formed by the remainders of these divisions, read in reverse order (the last remainder is the MSB). The procedure is shown in the following example –

Converting the decimal number 53.625 into its equivalent binary number –

The decimal number 53.625 has two parts – Integer (53) and Fraction (0.625).

· Integer conversion:

53 ÷ 2 = 26, remainder 1 → LSB

26 ÷ 2 = 13, remainder 0

13 ÷ 2 = 6, remainder 1

6 ÷ 2 = 3, remainder 0

3 ÷ 2 = 1, remainder 1

1 ÷ 2 = 0, remainder 1 → MSB

Reading the remainders from bottom to top gives the binary equivalent. Thus (53)10 = (110101)2.

· Fractional Conversion:

If the decimal number is a fraction, its binary equivalent is obtained by multiplying the fraction by 2 repeatedly, each time noting down the integer part (the carry) and continuing with the remaining fractional part. The carries, read in the order they were produced, give the binary fraction.

0.625 x 2 = 1.25, integer part 1 → MSB

0.25 x 2 = 0.50, integer part 0

0.50 x 2 = 1.00, integer part 1

The fractional part is now zero, so the process stops. Reading the carries from top to bottom gives (0.625)10 = (0.101)2. Combining the integer and fractional parts gives the binary equivalent (53.625)10 = (110101.101)2.
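Both procedures, repeated division for the integer part and repeated multiplication for the fraction, can be sketched together. This sketch assumes a non-negative number given as a decimal string and uses Python's `fractions.Fraction` so that values like 0.625 are handled exactly rather than as rounded floating-point numbers; the name `decimal_to_binary` and the `max_frac_bits` cut-off are illustrative choices.

```python
from fractions import Fraction


def decimal_to_binary(value: str, max_frac_bits: int = 16) -> str:
    """Convert a non-negative decimal string such as '53.625' to binary."""
    x = Fraction(value)                 # exact value, no floating-point rounding
    integer, fraction = divmod(x, 1)    # split into integer and fractional parts
    integer = int(integer)

    # Integer part: divide by 2 repeatedly, collect remainders, read them in reverse.
    int_bits = []
    while integer > 0:
        integer, remainder = divmod(integer, 2)
        int_bits.append(str(remainder))
    int_str = "".join(reversed(int_bits)) or "0"

    # Fractional part: multiply by 2 repeatedly, collect the integer parts in order.
    frac_bits = []
    while fraction and len(frac_bits) < max_frac_bits:
        fraction *= 2
        bit, fraction = divmod(fraction, 1)
        frac_bits.append(str(int(bit)))

    return int_str + ("." + "".join(frac_bits) if frac_bits else "")


print(decimal_to_binary("53.625"))  # 110101.101
```

The `max_frac_bits` limit matters because many decimal fractions (0.1, for instance) never reach zero when multiplied by 2, so the loop needs a stopping point.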

2) Decimal to Octal Conversion – Decimal to octal conversion follows the same procedure with 8 in place of 2: the integer part is divided by 8 repeatedly, and the fractional part is multiplied by 8 repeatedly.

For example, to convert (444.456)10 to an octal number,

Integer conversion:

444 ÷ 8 = 55, remainder 4

55 ÷ 8 = 6, remainder 7

6 ÷ 8 = 0, remainder 6

Reading the remainders from bottom to top, the decimal number (444)10 is equivalent to octal (674)8.

Fractional conversion:

0.456 x 8 = 3.648 → 3

0.648 x 8 = 5.184 → 5

0.184 x 8 = 1.472 → 1

0.472 x 8 = 3.776 → 3

0.776 x 8 = 6.208 → 6

This fraction never becomes zero, so the process is stopped once the desired number of significant digits (here five) has been obtained. Thus, the octal equivalent of (444.456)10 is approximately (674.35136)8.
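The same two loops work for any base once the divisor and multiplier are made a parameter. This is a minimal sketch assuming bases from 2 to 16 and a non-negative decimal string as input; `decimal_to_base` and `frac_digits` are hypothetical names, and the fraction is truncated (not rounded) after `frac_digits` digits, matching the hand calculation above.

```python
from fractions import Fraction

DIGITS = "0123456789ABCDEF"


def decimal_to_base(value: str, base: int, frac_digits: int = 5) -> str:
    """Convert a non-negative decimal string to `base` (2-16), truncating the fraction."""
    x = Fraction(value)
    integer = int(x)                     # truncates toward zero
    fraction = x - integer

    int_digits = []
    while integer > 0:                   # repeated division by the base
        integer, r = divmod(integer, base)
        int_digits.append(DIGITS[r])
    result = "".join(reversed(int_digits)) or "0"

    frac_out = []
    for _ in range(frac_digits):         # repeated multiplication by the base
        if fraction == 0:
            break
        fraction *= base
        d = int(fraction)
        frac_out.append(DIGITS[d])
        fraction -= d
    return result + ("." + "".join(frac_out) if frac_out else "")


print(decimal_to_base("444.456", 8))     # 674.35136
```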

3) Decimal to Hexadecimal Conversion – The hexadecimal equivalent can be obtained by dividing the given decimal number by 16 repeatedly and reading the remainders in reverse order, with remainders 10 to 15 written as A to F. For example –

Converting (115)10 to a hexadecimal number,

115 ÷ 16 = 7, remainder 3

7 ÷ 16 = 0, remainder 7

Reading the remainders from bottom to top, the decimal number (115)10 is equivalent to the hexadecimal (73)16.
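Repeated division by 16 can be sketched for integers as follows. The function name `decimal_to_hex` is hypothetical; the comparison with Python's built-in `format(n, "X")` is only a cross-check of the result.

```python
HEX_DIGITS = "0123456789ABCDEF"


def decimal_to_hex(n: int) -> str:
    """Convert a non-negative integer to hexadecimal by repeated division by 16."""
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        n, remainder = divmod(n, 16)
        digits.append(HEX_DIGITS[remainder])  # remainders 10-15 become A-F
    return "".join(reversed(digits))          # read remainders bottom to top


print(decimal_to_hex(115))                       # 73
print(decimal_to_hex(255))                       # FF
print(decimal_to_hex(115) == format(115, "X"))   # True
```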

4) Binary to Decimal Conversion – A binary number can be converted into a decimal number by multiplying each binary digit (1 or 0) by its weight and adding the products. For example, the binary number (101111.1101)2 can be converted into its decimal equivalent as follows –

Fig. 6: Image showing Binary to Decimal Conversion

$$(101111)_2 = 1 \times 2^5 + 0 \times 2^4 + 1 \times 2^3 + 1 \times 2^2 + 1 \times 2^1 + 1 \times 2^0 = 32 + 0 + 8 + 4 + 2 + 1 = 47$$

Therefore, (101111)2 can be written as (47)10.

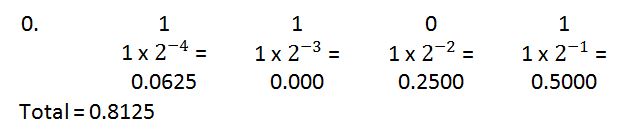

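The fractional part (0.1101)2 is converted as follows –

Fig. 7: Image showing Binary to Decimal Conversion of fraction

$$(0.1101)_2 = 1 \times 2^{-1} + 1 \times 2^{-2} + 0 \times 2^{-3} + 1 \times 2^{-4} = 0.5 + 0.25 + 0 + 0.0625 = 0.8125$$

Therefore, (0.1101)2 is equal to (0.8125)10, and so (101111.1101)2 is equal to (47.8125)10.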
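The weighted sum for both parts can be sketched as follows, assuming the input is a plain string of 0s and 1s with an optional binary point. The function name `binary_to_decimal` is hypothetical; `Fraction` keeps the negative-power weights exact.

```python
from fractions import Fraction


def binary_to_decimal(bits: str) -> Fraction:
    """Sum each bit times its weight: 2^k left of the point, 2^-k right of it."""
    int_part, _, frac_part = bits.partition(".")
    total = Fraction(0)
    for k, b in enumerate(reversed(int_part)):   # weights 2^0, 2^1, 2^2, ...
        total += int(b) * 2 ** k
    for k, b in enumerate(frac_part, start=1):   # weights 2^-1, 2^-2, ...
        total += int(b) * Fraction(1, 2 ** k)
    return total


print(float(binary_to_decimal("101111.1101")))   # 47.8125
```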

5) Octal to Decimal Conversion – An octal number can be converted to decimal by multiplying each digit of the octal number by its respective weight and adding the products. For example, the octal number (237)8 can be converted to decimal as follows –

$$
\begin{aligned}
(237)_8 &= 2 \times 8^2 + 3 \times 8^1 + 7 \times 8^0 \\
&= 2 \times 64 + 3 \times 8 + 7 \times 1 \\
&= 128 + 24 + 7 \\
&= (159)_{10}
\end{aligned}
$$
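The same sum can be evaluated without computing powers explicitly by using Horner's rule, which regroups 2 × 8^2 + 3 × 8^1 + 7 × 8^0 as (2 × 8 + 3) × 8 + 7. This is a swapped-in technique rather than the procedure described above, though both give the same result; the function name `octal_to_decimal` is hypothetical.

```python
def octal_to_decimal(octal: str) -> int:
    """Evaluate an octal string with Horner's rule: multiply by 8, then add the next digit."""
    value = 0
    for ch in octal:
        digit = int(ch)
        if digit > 7:
            raise ValueError(f"{ch!r} is not an octal digit")
        value = value * 8 + digit
    return value


print(octal_to_decimal("237"))                    # 159
print(octal_to_decimal("237") == int("237", 8))   # True
```

Horner's rule needs only one multiplication and one addition per digit, which is why it is a common way to evaluate positional numbers in software.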

6) Hexadecimal to Decimal Conversion – A hexadecimal number can be converted to decimal by multiplying each digit of the hexadecimal number by its respective weight and adding the products. For example, the hexadecimal number A3BH (the H suffix marks a hexadecimal number) can be converted into decimal as follows –

$$
\begin{aligned}
(A3B)_{16} &= A \times 16^2 + 3 \times 16^1 + B \times 16^0 \\
&= 10 \times 16^2 + 3 \times 16^1 + 11 \times 16^0 \\
&= 10 \times 256 + 3 \times 16 + 11 \times 1 \\
&= 2560 + 48 + 11 \\
&= (2619)_{10}
\end{aligned}
$$
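The hexadecimal weighted sum can be sketched with a digit-value lookup table. The names `HEX_VALUES` and `hex_to_decimal` are hypothetical; Python's `int(s, 16)` is used only as a cross-check.

```python
HEX_VALUES = {ch: i for i, ch in enumerate("0123456789ABCDEF")}


def hex_to_decimal(hex_str: str) -> int:
    """Multiply each hex digit by 16^position and add the products."""
    hex_str = hex_str.upper()
    n = len(hex_str)
    total = 0
    for i, ch in enumerate(hex_str):
        weight = 16 ** (n - 1 - i)          # 16^2, 16^1, 16^0 for a 3-digit number
        total += HEX_VALUES[ch] * weight    # A -> 10, B -> 11, ...
    return total


print(hex_to_decimal("A3B"))                     # 2619
print(hex_to_decimal("A3B") == int("A3B", 16))   # True
```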

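7) Octal to Binary and Binary to Octal Conversion – Octal to binary and binary to octal conversion is useful when 12-bit, 24-bit or 36-bit binary words have to be represented. Each octal digit directly represents three binary digits, so an octal number is converted to binary by replacing each digit with its 3-bit equivalent, and a binary integer is converted to octal by grouping its bits in threes starting from the right (padding the leftmost group with zeros if needed). For example, 7777 is equivalent to 111-111-111-111.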
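The three-bit grouping can be sketched for whole numbers as follows. Both function names are hypothetical, and the sketch assumes integer inputs without a binary point.

```python
def binary_to_octal(bits: str) -> str:
    """Group bits in threes from the right and replace each group by one octal digit."""
    padded = bits.zfill((len(bits) + 2) // 3 * 3)   # pad on the left to a multiple of 3
    groups = [padded[i:i + 3] for i in range(0, len(padded), 3)]
    return "".join(str(int(g, 2)) for g in groups)


def octal_to_binary(octal: str) -> str:
    """Expand each octal digit into exactly three bits."""
    return "".join(format(int(d, 8), "03b") for d in octal)


print(binary_to_octal("111111111111"))   # 7777
print(octal_to_binary("7777"))           # 111111111111
print(binary_to_octal("110101"))         # 65
```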

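8) Hexadecimal to Binary and Binary to Hexadecimal Conversion – Hexadecimal to binary and binary to hexadecimal conversion is useful when 8-bit, 16-bit or 32-bit binary words have to be represented. Each hexadecimal digit directly represents four binary digits, so a hexadecimal number is converted to binary by replacing each digit with its 4-bit equivalent, and a binary integer is converted to hexadecimal by grouping its bits in fours starting from the right (padding the leftmost group with zeros if needed). For example, FF is equivalent to 1111-1111.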

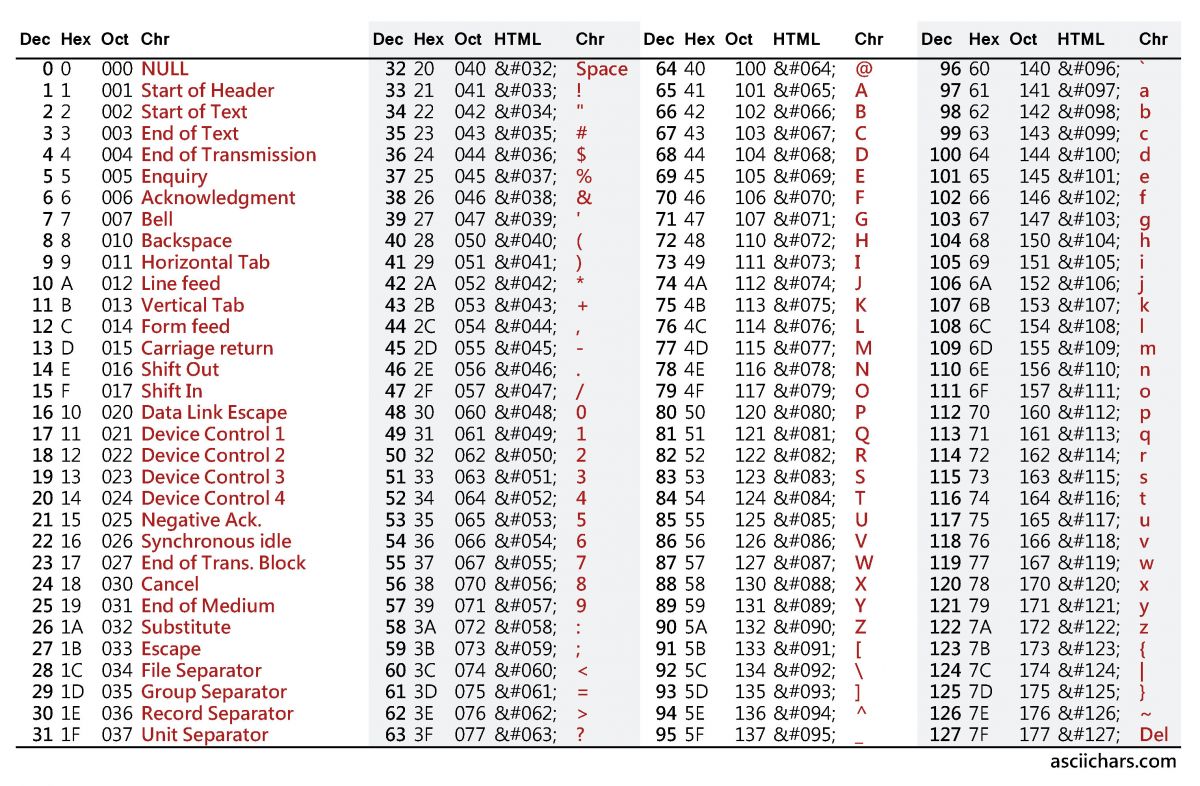
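The four-bit grouping for hexadecimal follows the same pattern as the octal case, with groups of four instead of three. The function names are hypothetical and the sketch handles whole numbers only.

```python
def binary_to_hex(bits: str) -> str:
    """Group bits in fours from the right and replace each group by one hex digit."""
    padded = bits.zfill((len(bits) + 3) // 4 * 4)   # pad on the left to a multiple of 4
    return "".join(format(int(padded[i:i + 4], 2), "X") for i in range(0, len(padded), 4))


def hex_to_binary(hex_str: str) -> str:
    """Expand each hex digit into exactly four bits."""
    return "".join(format(int(d, 16), "04b") for d in hex_str)


print(binary_to_hex("11111111"))   # FF
print(hex_to_binary("FF"))         # 11111111
print(binary_to_hex("1110011"))    # 73
```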

ASCII Character Code –

Code systems are fixed sets of symbols (including digits) that can be used to represent information. The American Standard Code for Information Interchange (ASCII) has long been the most common format for text files in computers and on the Internet. In an ASCII file, each alphabetic, numeric or special character is represented by a 7-bit binary number, so 128 (2^7) symbols (characters) can be represented in this system. UNIX and DOS-based operating systems use ASCII for text files. ASCII was developed by the American Standards Association, the predecessor of the American National Standards Institute (ANSI).
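The mapping from a character to its ASCII number and 7-bit pattern can be sketched with Python's built-in `ascii` codec. The helper name `ascii_code` is hypothetical; encoding a non-ASCII character raises `UnicodeEncodeError`, which reflects the 128-symbol limit.

```python
def ascii_code(ch: str) -> tuple[int, str]:
    """Return the ASCII code of a single character and its 7-bit binary pattern."""
    data = ch.encode("ascii")        # raises UnicodeEncodeError for non-ASCII characters
    if len(data) != 1:
        raise ValueError("expected exactly one character")
    code = data[0]
    return code, format(code, "07b")


for ch in ("A", "a", "0"):
    print(ch, *ascii_code(ch))
# A 65 1000001
# a 97 1100001
# 0 48 0110000
```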

Fig. 8: Table listing ASCII Character Codes

Unicode –

The ASCII character code can represent only English letters, decimal digits and some special characters. A code was therefore needed that could accommodate characters and symbols from other languages and scripts. The Unicode Worldwide Character Standard is a system for “the interchange, processing, and display of the written texts of the diverse languages of the modern world.” Early versions of the Unicode standard contained 34,168 distinct coded characters derived from 24 supported language scripts; current versions encode well over 100,000 characters.
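Each Unicode character has a numeric code point, conventionally written as U+ followed by hexadecimal digits, and that number is stored in binary through an encoding form. The sketch below uses UTF-8, one widely used Unicode encoding (an assumption for illustration, not the only option), to show that ASCII characters keep their one-byte codes while other scripts need more bytes. The helper name `describe` is hypothetical, and characters are written as escapes so the source stays plain ASCII.

```python
def describe(ch: str) -> tuple[str, str]:
    """Return the Unicode code point (U+XXXX notation) and UTF-8 bytes of one character."""
    return f"U+{ord(ch):04X}", ch.encode("utf-8").hex(" ")


samples = {
    "Latin capital A": "A",
    "Latin small e with acute": "\u00e9",
    "Devanagari letter A": "\u0905",
    "Euro sign": "\u20ac",
}
for name, ch in samples.items():
    print(name, *describe(ch))
# Latin capital A U+0041 41
# Latin small e with acute U+00E9 c3 a9
# Devanagari letter A U+0905 e0 a4 85
# Euro sign U+20AC e2 82 ac
```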

Fig. 9: Table listing Unicode Symbols and Codes

EBCDIC Code –

Extended Binary Coded Decimal Interchange Code (EBCDIC) is a binary code for alphabetic and numeric characters that IBM developed for its larger operating systems. It is the code used for text files in IBM’s OS/390 operating system for its S/390 servers. In an EBCDIC file, each alphabetic or numeric character is represented by an 8-bit binary number, so a maximum of 256 symbols can be represented.
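The difference between ASCII and EBCDIC is easiest to see by encoding the same characters both ways. This sketch uses Python's standard `cp037` codec, which implements IBM's EBCDIC code page for US/Canada; EBCDIC exists in several national code pages, so treating cp037 as representative is an assumption. The helper name `compare_codes` is hypothetical.

```python
def compare_codes(text: str) -> list[tuple[str, int, int]]:
    """Pair each character with its ASCII code and its EBCDIC code (code page 037)."""
    ascii_bytes = text.encode("ascii")
    ebcdic_bytes = text.encode("cp037")   # cp037: IBM EBCDIC US/Canada code page
    return list(zip(text, ascii_bytes, ebcdic_bytes))


for ch, a, e in compare_codes("Aa0"):
    print(ch, f"ASCII 0x{a:02X}", f"EBCDIC 0x{e:02X}")
# A ASCII 0x41 EBCDIC 0xC1
# a ASCII 0x61 EBCDIC 0x81
# 0 ASCII 0x30 EBCDIC 0xF0
```

Because the same letter has different codes in the two systems, text moved between ASCII and EBCDIC machines must be translated rather than copied byte for byte.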

Fig. 10: Table listing EBCDIC Characters and Codes

The next tutorial covers arithmetic operations on binary numbers.
