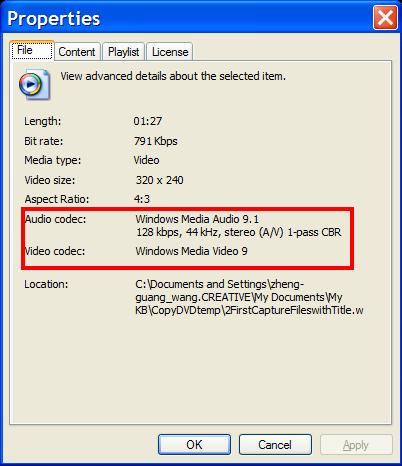

Clicking on a Michael Jackson video or the Mission Impossible movie leads to…

A video display. But what is so special about these files? What data do they contain, and how does a player play them? The answer is the codec.

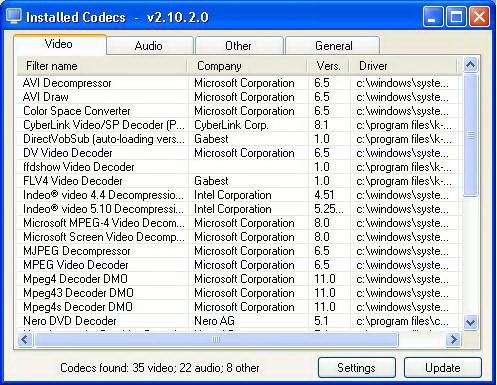

A codec is software (at times also a hardware unit, such as the set-top box attached to a video conferencing system) that encodes and/or decodes a signal or digital media file such as a song or video. Players use codecs to play and create digital media files.

CODEC = COder + DECoder

A codec is also described as a combination of a compression (encoding) function and/or a decompression (decoding) function. A codec need not include both; some codecs include only one of them.

A codec is a critical component in the process of digital audio and video signal transmission.

Video Codec

A video codec is a combination of hardware (e.g., our video players) and/or software that represents the video and audio captured by a camera as a digital stream of data (generally binary data, 0s and 1s).

Encoders behave differently in different capture devices because the output format depends on the specific system. Capture devices and encoders are nevertheless different things. For example, a capture card creates a binary stream of data that is stored as a file, whereas an encoder creates a stream of data that is transferred to a second device. That second device is generally termed the decoder, and its task is to decode the stream of data and recreate the video and audio captured by the camera.

Process:

To start the process, video and audio must be present; these are shot by a camera (digicam, pan-tilt-zoom camera, etc.). The camera has a sensor (a CMOS sensor, for example) that scans the scene from left to right to create lines, and then moves vertically, building a grid of 480 lines per scene. This creates video frames, shot at a rate of 30 frames per second. In standard-definition TV, samples are taken at 720 points on each line, and each point corresponds to 1 pixel. To regenerate the original scene, these pixel points are energized. Each sampled position can be described by its brightness along with its color (the chroma value: the amounts of red, green, and blue). Today, however, Y, Cr, and Cb (the brightness, the red signal, and the blue signal) are passed instead, because the remaining value, green, can be derived from them automatically.
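To make the Y, Cr, Cb idea concrete, here is a minimal Python sketch. It assumes the common BT.601 weighting of red, green, and blue; the article does not name a standard, so the coefficients and the function names (`rgb_to_ycbcr`, `ycbcr_to_rgb`, `raw_bits_per_second`) are illustrative assumptions. It shows that green is recovered from the three transmitted values, and it works out the raw data rate implied by the 720 x 480, 30 frames per second figures, assuming 8 bits per sample and three samples per pixel.

```python
# Assumed BT.601 luma weights; the article does not specify a standard.
KR, KG, KB = 0.299, 0.587, 0.114


def rgb_to_ycbcr(r, g, b):
    """Convert R, G, B in [0, 1] to brightness Y and color differences Cb, Cr."""
    y = KR * r + KG * g + KB * b      # brightness: weighted sum of R, G, B
    cb = (b - y) / (2 * (1 - KB))     # blue signal: how far blue is from Y
    cr = (r - y) / (2 * (1 - KR))     # red signal: how far red is from Y
    return y, cb, cr


def ycbcr_to_rgb(y, cb, cr):
    """Recover R, G, B from Y, Cb, Cr; green is never transmitted directly."""
    r = y + 2 * (1 - KR) * cr
    b = y + 2 * (1 - KB) * cb
    g = (y - KR * r - KB * b) / KG    # green follows from the definition of Y
    return r, g, b


def raw_bits_per_second(width=720, height=480, fps=30,
                        samples_per_pixel=3, bits_per_sample=8):
    """Uncompressed data rate for the frame geometry described above."""
    return width * height * fps * samples_per_pixel * bits_per_sample


if __name__ == "__main__":
    y, cb, cr = rgb_to_ycbcr(0.2, 0.6, 0.4)
    print("Y, Cb, Cr:", y, cb, cr)
    print("recovered R, G, B:", ycbcr_to_rgb(y, cb, cr))
    # 720 * 480 * 30 * 3 * 8 = 248,832,000 bits per second
    print("raw bits per second:", raw_bits_per_second())
```

Under these assumptions the uncompressed stream is roughly 249 Mbit/s, which is why a codec has to compress the data before it is transmitted.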

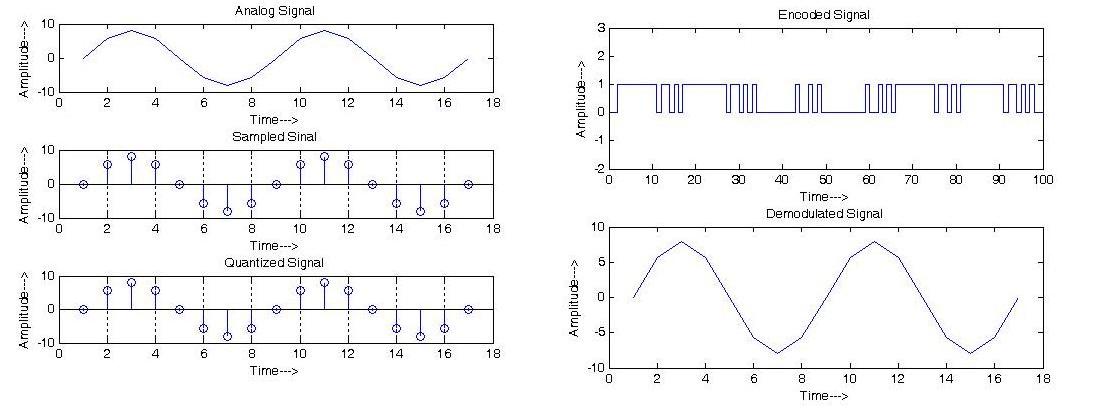

The work of a video codec can be roughly divided into 3 stages:

1. The camera captures the video and audio as a signal that is analog in nature. The codec samples this output signal; the rate at which sampling is done is known as the sampling rate. Brightness data is sampled more often than color data because our eyes are more sensitive to brightness than to color.

2. Analog-to-digital conversion then takes place, and each sample is generally converted into 8 bits. This stage is called quantization.

3. In the last phase, the codec compresses the binary data stream so that it can be transmitted efficiently, since video carries a lot of information. Compression can be done in two ways: lossless and lossy. Lossless compression uses fewer bits while still allowing exact recovery of the original data stream; zipping a file and disk compression are examples. But lossless compression rarely achieves more than an 80% reduction in the number of bits in the data stream, so it is rarely used for audio or video compression. Lossy compression is generally preferred for audio and video. In this technique, sampled values of brightness and the other parameters are stored according to how much they vary from an average value.
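The three stages above can be sketched in a few lines of Python. This is a toy illustration, not how any real codec is implemented: the function names, the sine-wave test signals, the sampling rates of 720 and 360, and the `step` parameter are all assumptions made for the example. The lossless path uses Python's standard `zlib` module, and the lossy path is a deliberately crude version of "store how far each value is from the average".

```python
import math
import zlib


def sample(signal, duration, rate):
    """Stage 1: read a continuous signal at `rate` samples per second."""
    count = int(duration * rate)
    return [signal(i / rate) for i in range(count)]


def quantize(samples, bits=8):
    """Stage 2: map each sample in [0, 1] to an integer that fits in `bits` bits."""
    top = (1 << bits) - 1
    return [min(top, max(0, round(s * top))) for s in samples]


def lossy_encode(levels, step=8):
    """Stage 3 (lossy): keep the average plus coarsely rounded deviations.

    Hypothetical scheme for illustration; rounding to multiples of `step`
    throws away detail that cannot be recovered.
    """
    avg = round(sum(levels) / len(levels))
    deltas = [round((v - avg) / step) for v in levels]
    return avg, step, deltas


def lossy_decode(avg, step, deltas):
    """Rebuild approximate 8-bit values from the average and the deviations."""
    return [min(255, max(0, avg + d * step)) for d in deltas]


def brightness(t):
    """Hypothetical analog brightness signal in [0, 1]."""
    return 0.5 + 0.4 * math.sin(2 * math.pi * t)


def color(t):
    """Hypothetical analog color signal in [0, 1]."""
    return 0.5 + 0.3 * math.cos(2 * math.pi * t)


if __name__ == "__main__":
    # Brightness is sampled at twice the rate of color (assumed ratio).
    luma = quantize(sample(brightness, 1.0, 720))
    chroma = quantize(sample(color, 1.0, 360))
    print("samples:", len(luma), "brightness,", len(chroma), "color")

    # Lossless: the original bytes come back exactly.
    packed = zlib.compress(bytes(luma))
    print("lossless round trip exact:", zlib.decompress(packed) == bytes(luma))

    # Lossy: the values come back only approximately.
    restored = lossy_decode(*lossy_encode(luma))
    print("largest lossy error:", max(abs(a - b) for a, b in zip(luma, restored)))
```

The design point to notice is the trade-off: the `zlib` round trip returns the data bit for bit, while the lossy path accepts a bounded error per sample (at most half of `step` here) in exchange for storing smaller numbers.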

