While we are all fascinated by the touchscreen market's gimmicks coaxing us with the tagline ‘Touch away’, are we really only an inch away from the technology? This ‘no distance’ holds only at the front end. Look closer with a hawk’s eye and there is a whole other world inside, waiting to be discovered and to satisfy your curiosity about technology.

Fig. 1: A Representational Image of Multi-Screen Touch Technology

Well, in a smart world of smart gadgets and smart people, it would be remiss to skip a discussion of touchscreen technology, and specifically of multi-touch technology.

Multi-touch, or plural touch, is an interaction technique that lets users interact with the digital environment inside a gadget, directly or indirectly, using their fingers or palm. Multi-touch devices include smartphones, touch tables, tablet computers, public kiosks, walls and touchpads. The inclusion of touchpads brings us to a fine line of demarcation between a touchscreen and a touchpad: in a touchscreen, the screen being manipulated lies directly underneath the interactive surface, whereas in a touchpad the touch surface does not overlay the screen.

Evolution of Multi-Touch Technology:

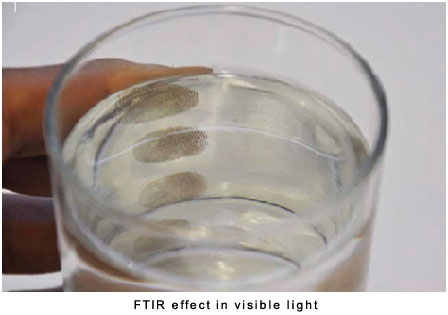

It is not often that someone devises a new technology by observing common, everyday principles of science at work. But Jeff Han did, and his first presentation at TED left the audience on the edge of their seats. In 2006, Han, then a research scientist at NYU, unveiled his multi-touch table based on the principle of Frustrated Total Internal Reflection (FTIR). He had observed that, in a glass of water, light reflects differently at the spots where a hand touches the glass. Light is trapped inside the glass by total internal reflection; wherever a finger or palm touches the glass, the light is scattered, or ‘frustrated’, at the point of contact. This effect is called FTIR. In 2007, Apple Inc. brought its revolutionary multi-touch technology to mobile phones with the iPhone. The iPhone's interface responds to a soft touch because it uses capacitive coupling to sense multiple touch points. In the same year, Apple’s rival Microsoft released the astounding Microsoft Surface, based on DI (Diffused Illumination) technology. Other breakthroughs in multi-touch technology include MERL’s DiamondTouch, TISCH, the Fraunhofer multi-touch table, Microsoft wall, ThinSight, N-trig and Surface 2.0.

Fig. 2: A Figure Illustrating FTIR Effect in Visible Light

Multi-Touch Technologies:

Various techniques are used to build multi-touch human-interface devices. They are either sensor based or camera (computer-vision optics) based.

· Optical Sensing Multi-touch Technology

Optical sensing, or camera-based sensing, is the most widely used technique for multi-touch tables and walls today. Here, cameras act as sensors that detect the presence of an object or a finger on the touch surface. The main hardware of an optical system comprises an infrared (IR) light source, cameras or optical sensors for touch detection, and an LED/LCD panel or a projector for display and visual feedback. Two types of light are involved in designing a multi-touch surface, visible light and infrared light, and each plays an essential role. Visible light, which lies roughly between 400 nm and 700 nm on the electromagnetic spectrum, illuminates the display and gives the user visual feedback on an LCD or projector. IR light, on the other hand, is used to locate a touch or an object on the surface. Near-IR wavelengths lie roughly between 700 nm and 1000 nm, just beyond the range perceptible to the human eye. A single LED or an LED ribbon serves as the IR light source. The digital camera is made sensitive only to IR light by fitting a filter that blocks visible light. As a result, the camera sees only the user's interactions illuminated by IR, not the visible feedback on the display.
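
To make the role of the filter concrete, here is a minimal Python sketch that models an ideal IR-pass filter in front of the camera. The band edges are the approximate ranges quoted above; the source names and the 850 nm LED wavelength are illustrative assumptions (850 nm is a common IR LED wavelength, but not the only one used).

```python
# Approximate band edges in nanometres, taken from the ranges discussed above.
VISIBLE_NM = (400.0, 700.0)
NEAR_IR_NM = (700.0, 1000.0)


def band(wavelength_nm: float) -> str:
    """Classify a wavelength as 'visible', 'near-ir' or 'other'."""
    if VISIBLE_NM[0] <= wavelength_nm < VISIBLE_NM[1]:
        return "visible"
    if NEAR_IR_NM[0] <= wavelength_nm <= NEAR_IR_NM[1]:
        return "near-ir"
    return "other"


def camera_sees(sources: list[tuple[str, float]]) -> list[str]:
    """Model an ideal IR-pass filter: only near-IR light reaches the sensor."""
    return [name for name, wavelength in sources if band(wavelength) == "near-ir"]


if __name__ == "__main__":
    scene = [
        ("projected image (green)", 550.0),
        ("fingertip lit by IR LED", 850.0),  # hypothetical 850 nm LED
    ]
    print(camera_sees(scene))  # ['fingertip lit by IR LED']
```

A real filter has a gradual cut-off rather than a hard edge, so this only captures the idea that the projected image stays invisible to the camera.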

Let us now have a look at the major optical technologies governing the multi-touch era:

1. Frustrated Total Internal Reflection (FTIR) – The FTIR principle is an extension of total internal reflection (TIR). When light travelling in a denser medium strikes the boundary with a rarer medium at an angle of incidence greater than the critical angle, it is reflected entirely back into the denser medium; this is TIR. The light is thus trapped inside the medium. When an object whose refractive index is much higher than that of air, such as a fingertip, touches the medium carrying this trapped light, the reflection is frustrated at that point and bright spots (blobs) appear. The frustrated light escapes the medium and is scattered downwards towards the infrared camera, which captures the image of the touch point and relays it for further processing. The light used here is IR, and the medium that traps it is usually a sheet of acrylic.

Fig. 3: A Diagram Representing Principle of Frustrated Total Internal Reflection

Layers in an FTIR system: An acrylic sheet with a thickness of 1 mm to 6 mm is used. Its edges are sanded with dry and then wet sandpaper to remove scratches and give them a smooth finish. A baffle of wood or metal is fitted along the sides to stop light from the LEDs leaking out at the edges. The next layer makes sure the camera receives filtered data, i.e. only bright images (touches) should be visible to it; this is handled by the diffuser. The next layer is specific to FTIR systems and is known as the compliant layer. It is an additional layer placed between the projection surface and the acrylic pane. It is well known that the performance of a bare FTIR surface improves when the touching fingers are moist: the greasier the finger, the better the result. Since it is not practical to keep fingers wet or sweaty all the time, a silicone compliant layer solves the problem. It is made of a material with a refractive index slightly higher than that of the acrylic waveguide. It improves performance because the layer is pressure sensitive, produces brighter blobs and protects the acrylic from scratches.
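
The TIR condition in item 1 follows from Snell's law: light going from a medium of index n1 into one of index n2 < n1 is totally reflected when its angle of incidence exceeds the critical angle θc = arcsin(n2/n1). The short Python sketch below evaluates this for acrylic against air. The index 1.49 is an approximate textbook value for acrylic, and the 1.47 contact medium is a hypothetical stand-in for a touching object, chosen only to show how contact can defeat TIR.

```python
import math

N_ACRYLIC = 1.49  # approximate refractive index of acrylic (PMMA)
N_AIR = 1.00


def critical_angle_deg(n_dense: float, n_rare: float) -> float:
    """Critical angle in degrees for light going from a denser to a rarer medium."""
    if n_rare >= n_dense:
        raise ValueError("TIR needs light travelling from a denser to a rarer medium")
    return math.degrees(math.asin(n_rare / n_dense))


def is_totally_reflected(incidence_deg: float, n_dense: float, n_rare: float) -> bool:
    """True if a ray at this angle of incidence is totally internally reflected."""
    return incidence_deg > critical_angle_deg(n_dense, n_rare)


if __name__ == "__main__":
    print(f"acrylic/air critical angle: {critical_angle_deg(N_ACRYLIC, N_AIR):.1f} deg")
    # A ray at 60 degrees stays trapped where the acrylic meets air...
    print(is_totally_reflected(60.0, N_ACRYLIC, N_AIR))  # True
    # ...but escapes where a hypothetical contact medium with n = 1.47 touches it.
    print(is_totally_reflected(60.0, N_ACRYLIC, 1.47))   # False
```

The critical angle comes out at about 42 degrees, so IR injected steeply enough from the edges stays trapped until something touches the surface.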

2. Diffused Illumination (DI) – While the hardware for a diffused-illumination multi-touch surface resembles that of FTIR, the principle is different. Here, the IR illuminators are placed behind the touch surface along with the camera. Rear illumination, however, does not spread light uniformly over the surface. As the name suggests, in DI a diffuser layer is mounted either below or above the touch surface to reduce the direct light reaching the camera (a diffuser scatters light, so less of it is reflected straight back). When the user touches the surface, light is reflected and an image of the touch is formed. This image is sensed by the digital camera mounted below the surface. Because the object reflects more light than the diffuser, the camera can easily detect the surplus light coming from the touch point.

Fig. 4: A Diagram Representing Principle of Diffused Illumination

One important advantage of DI is that it is not limited to finger touches: it can also sense objects placed on, or hovering just above, the touch surface.
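
The DI detection step can be sketched as background subtraction followed by a threshold: a frame captured with nothing on the surface is stored, and pixels that become much brighter are treated as touches. This is a minimal illustration on plain Python lists; the threshold of 40 grey levels and the 8-bit brightness values are hypothetical.

```python
Frame = list[list[int]]


def touch_mask(frame: Frame, background: Frame, threshold: int = 40) -> list[list[bool]]:
    """Mark pixels that are noticeably brighter than the stored background frame.

    In DI a touching object reflects more IR than the bare diffuser, so a pixel
    whose brightness rises well above the empty-surface background is treated
    as part of a touch. The default threshold is a hypothetical value.
    """
    return [
        [(pixel - bg) > threshold for pixel, bg in zip(row, bg_row)]
        for row, bg_row in zip(frame, background)
    ]


if __name__ == "__main__":
    background = [[20] * 4 for _ in range(3)]  # captured with nothing on the surface
    frame = [row[:] for row in background]
    frame[1][2] = 120  # a fingertip reflecting extra IR
    for row in touch_mask(frame, background):
        print("".join("#" if hit else "." for hit in row))
```

Subtracting a stored background, rather than using a fixed brightness cut-off, also compensates for the uneven rear illumination mentioned above.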

3. Diffused Surface Illumination (DSI) – This technique solves DI's problem of uneven light distribution. The hardware setup is similar to FTIR, except that a special type of acrylic pane is used. This acrylic contains a large number of small particles that act like tiny mirrors, reflecting the light and illuminating the surface uniformly. Touches are then detected in the same way as in DI.

Fig. 5: A Diagram Representing Technique of Diffused Surface Illumination

4. Laser Light Plane (LLP) – In the laser light plane technique, an infrared laser source illuminates the surface. The power of the laser source must be kept within safety limits. The lasers create a plane of light just above the surface, not on or below it. When an object breaks this plane, the light is scattered and picked up by the camera mounted below. One major disadvantage of LLP is occlusion: with only one or two laser sources, an object on the surface may block the light from reaching other objects on the same surface.

Fig. 6: A Diagram Representing Technique of Laser Light Plane Technology
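
The occlusion problem can be illustrated with a small 2-D geometry sketch. As a simplifying assumption, each fingertip is a circle in the light plane, and a finger counts as lit only if the straight ray from a laser to its centre is not blocked by a nearer finger; real laser planes and finger shapes are more involved. The finger names, positions and radii are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Finger:
    name: str
    x: float
    y: float
    radius: float  # cross-section of the fingertip where it cuts the light plane


def _distance_to_segment(px, py, ax, ay, bx, by):
    """Shortest distance from point P to segment AB."""
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0:
        return math.hypot(px - ax, py - ay)
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / length_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def lit_fingers(laser: tuple[float, float], fingers: list[Finger]) -> list[str]:
    """Names of fingers whose centre is reachable by a straight ray from the laser."""
    lx, ly = laser
    lit = []
    for target in fingers:
        target_dist = math.hypot(target.x - lx, target.y - ly)
        blocked = any(
            other is not target
            and math.hypot(other.x - lx, other.y - ly) < target_dist
            and _distance_to_segment(other.x, other.y, lx, ly, target.x, target.y) < other.radius
            for other in fingers
        )
        if not blocked:
            lit.append(target.name)
    return lit


if __name__ == "__main__":
    touches = [Finger("A", 10, 0, 1), Finger("B", 20, 0, 1), Finger("C", 10, 10, 1)]
    print(lit_fingers((0, 0), touches))   # ['A', 'C']  (B is in A's shadow)
    print(lit_fingers((30, 0), touches))  # ['B', 'C']  (A is in B's shadow)
```

Each laser on its own misses one finger, but together the two lasers light all three, which is why adding laser sources around the frame reduces occlusion.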

· Sensor based multi-touch technology

Multi-touch systems are also built on various other sensing technologies, such as:

o Resistance-based touch surfaces

o Capacitance-based touch surfaces

o Surface acoustic wave (SAW) touch surfaces

Note that these technologies call for high-precision design and accuracy, and hence are built only to industry standards. Since resistive and capacitive touchscreens have already been discussed before, let us take a glance at the SAW technique. Instead of a grid of light beams, SAW hardware sets up a grid of ultrasonic waves across a glass faceplate. To detect the X and Y coordinates, transmitting and receiving piezoelectric transducers are mounted on the faceplate, and reflectors direct the ultrasonic waves across the surface. When a finger touches the screen, it absorbs part of the wave; the processor observes the resulting drop in received wave intensity and interprets it to calculate the position of the touch.
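
Because the waves reach the receiver over paths of different lengths, the moment at which the received signal dips tells the controller where the touch is. The Python sketch below illustrates this for one axis under a hypothetical geometry in which the extra path length grows as 2·x, so a dip seen t seconds into the burst maps to x = t·v/2. The sample rate, wave speed and dip threshold are illustrative values, not figures from any particular controller.

```python
from typing import Optional, Sequence


def touch_position_mm(
    baseline: Sequence[float],
    received: Sequence[float],
    sample_rate_hz: float,
    wave_speed_mm_s: float,
    min_drop: float = 0.2,
) -> Optional[float]:
    """Estimate the touch position along one axis from a dip in the received burst.

    Assumes (hypothetically) that the extra path length to the receiver grows
    as 2 * x, so a dip seen t seconds into the burst maps to x = t * v / 2.
    Returns None when no sample drops by at least `min_drop` (no touch).
    """
    drops = [b - r for b, r in zip(baseline, received)]
    index = max(range(len(drops)), key=drops.__getitem__)
    if drops[index] < min_drop:
        return None
    t = index / sample_rate_hz
    return t * wave_speed_mm_s / 2.0


if __name__ == "__main__":
    baseline = [1.0] * 100       # received amplitude with no touch
    received = baseline.copy()
    received[40] = 0.5           # a finger absorbs part of the wave
    # 1 MHz sampling and a 3000 m/s (3e6 mm/s) wave speed are illustrative values.
    print(touch_position_mm(baseline, received, 1e6, 3e6))
```

The same calculation would be repeated for the Y axis with its own transmitter and receiver pair.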

Multi-Touch Software Architecture:

Once the hardware is in place, another equally important task remains: turning the raw input image data into processed data from which gestures can be detected and acted upon. This requires multi-touch software. A generic architecture for multi-touch software is outlined below:

Fig. 7: A Diagram Showcasing Generic Architecture for the Design of Multi-Touch Software

This framework links the input from the hardware to gesture recognition. Multi-touch programming is a two-fold process: reading and translating the “blob” input from the camera or other input device, and relaying this information through predefined protocols to frameworks that assemble the raw blob data into gestures, which high-level languages can then use to interact with an application.

1. Input Hardware layer: This is the lowest layer of the framework. It gathers raw input from the hardware in the form of video or electrical signals. The data may come from an optical sensor or even a physical mouse.

2. Hardware Abstraction layer: At this layer, the raw input data is processed with image-processing techniques to generate a stream of positions of fingers, hands or objects.

3. Transformation layer: This layer converts image coordinates into screen coordinates. Here the raw data is calibrated and made ready for interpretation at the next layer. Calibration means that the software knows which spot on the touchscreen each image or blob on the touch sensor (given in image coordinates) corresponds to.
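
One simple way to picture calibration is to ask the user to touch three known screen points, record where the blobs appear in the camera image, and fit an affine transform between the two coordinate systems. The Python sketch below does this with Cramer's rule. The affine model and the function names are assumptions for illustration; practical systems may use more calibration points and a non-linear mapping to correct lens distortion.

```python
Point = tuple[float, float]


def _det3(m: list[list[float]]) -> float:
    """Determinant of a 3x3 matrix."""
    return (
        m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
        - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
        + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0])
    )


def _solve3(m: list[list[float]], rhs: list[float]) -> list[float]:
    """Solve m @ x = rhs for a 3x3 system using Cramer's rule."""
    d = _det3(m)
    if abs(d) < 1e-12:
        raise ValueError("calibration points must not be collinear")
    solution = []
    for col in range(3):
        replaced = [row[:] for row in m]
        for r in range(3):
            replaced[r][col] = rhs[r]
        solution.append(_det3(replaced) / d)
    return solution


def fit_affine(camera_pts: list[Point], screen_pts: list[Point]):
    """Return a function mapping camera (image) coordinates to screen coordinates.

    Fits x_s = a*u + b*v + c and y_s = d*u + e*v + f from three point pairs.
    """
    m = [[u, v, 1.0] for u, v in camera_pts]
    ax = _solve3(m, [x for x, _ in screen_pts])
    ay = _solve3(m, [y for _, y in screen_pts])

    def to_screen(p: Point) -> Point:
        u, v = p
        return (ax[0] * u + ax[1] * v + ax[2], ay[0] * u + ay[1] * v + ay[2])

    return to_screen


if __name__ == "__main__":
    # Hypothetical 640x480 camera image mapped onto a 1920x1080 screen.
    to_screen = fit_affine([(0, 0), (640, 0), (0, 480)], [(0, 0), (1920, 0), (0, 1080)])
    print(to_screen((320, 240)))  # (960.0, 540.0)
```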

Together, the processes in layers 2 and 3 are called blob tracking. The resulting blob data is sent in packets to the server following a set of rules known as the TUIO protocol.
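
Blob tracking can be sketched in two parts: grouping neighbouring bright pixels of a thresholded frame into blobs and taking their centroids, then giving each blob a stable ID from frame to frame. The Python sketch below uses a breadth-first flood fill and a greedy nearest-neighbour match; both are simplifying choices for illustration, not Touchlib's actual algorithm, and the `max_jump` limit is a hypothetical value. The stable IDs play the role of the session IDs that TUIO's ALIVE messages carry.

```python
import math
from collections import deque
from itertools import count

Point = tuple[float, float]


def find_blobs(mask: list[list[bool]], min_pixels: int = 1) -> list[Point]:
    """Group touching 'on' pixels (4-connectivity) and return each blob's centroid."""
    height = len(mask)
    width = len(mask[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    centroids = []
    for y in range(height):
        for x in range(width):
            if not mask[y][x] or seen[y][x]:
                continue
            seen[y][x] = True
            queue = deque([(x, y)])
            pixels = []
            while queue:  # breadth-first flood fill of one blob
                cx, cy = queue.popleft()
                pixels.append((cx, cy))
                for nx, ny in ((cx + 1, cy), (cx - 1, cy), (cx, cy + 1), (cx, cy - 1)):
                    if 0 <= nx < width and 0 <= ny < height and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((nx, ny))
            if len(pixels) >= min_pixels:
                centroids.append((sum(p[0] for p in pixels) / len(pixels),
                                  sum(p[1] for p in pixels) / len(pixels)))
    return centroids


class BlobTracker:
    """Greedy nearest-neighbour tracker that keeps a stable ID per blob."""

    def __init__(self, max_jump: float = 20.0):
        self.max_jump = max_jump  # hypothetical max movement per frame, in pixels
        self.tracks: dict[int, Point] = {}
        self._next_id = count(1)

    def update(self, centroids: list[Point]) -> dict[int, Point]:
        unmatched = list(centroids)
        new_tracks: dict[int, Point] = {}
        for blob_id, (px, py) in self.tracks.items():
            if not unmatched:
                break
            best = min(unmatched, key=lambda c: math.hypot(c[0] - px, c[1] - py))
            if math.hypot(best[0] - px, best[1] - py) <= self.max_jump:
                new_tracks[blob_id] = best
                unmatched.remove(best)
        for c in unmatched:  # anything left over is a new touch
            new_tracks[next(self._next_id)] = c
        self.tracks = new_tracks  # old tracks with no match are dropped (finger lifted)
        return dict(new_tracks)


if __name__ == "__main__":
    mask = [[False] * 6 for _ in range(5)]
    mask[1][1] = mask[1][2] = True
    mask[3][4] = True
    tracker = BlobTracker()
    print(tracker.update(find_blobs(mask)))               # {1: (1.5, 1.0), 2: (4.0, 3.0)}
    print(tracker.update([(4.5, 3.0), (2.0, 1.0)]))       # same IDs after a small move
```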

4. Interpretation layer: The interpretation layer already knows the regions on the screen. When the calibrated data reaches this layer, it assigns a meaning to every gesture performed on the screen; this process is known as gesture recognition. A gesture is defined by its start point, its end point and the motion between them. With multi-touch, the device must also be able to detect combinations of such gestures. Gesture recognition can be subdivided into three steps:

· Detection of Intention – The first step is to check whether the touch was made inside the application window. Only touches inside the window need to be interpreted. The TUIO protocol then relays the touch event to the server so that it reaches the right application.

· Gesture Segmentation – The touch events are divided into segments according to the object the user intends to act on.

· Gesture Classification – Finally, the segmented data is mapped to the correct command.

Common techniques for gesture recognition include Hidden Markov Models, Artificial Neural Networks and Finite State Machines.
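
Of the techniques just listed, a finite state machine is the easiest to sketch. The Python class below classifies a single-finger stroke from its start point, end point and the motion in between, matching the definition of a gesture given above. The gesture names, the movement and hold thresholds, and the y-down screen convention are all assumptions for illustration.

```python
import math
from typing import Optional


class TapSwipeFSM:
    """Tiny finite state machine: idle -> pressed -> moving -> idle.

    Screen coordinates are assumed to grow to the right (x) and downwards (y).
    The movement and hold thresholds are hypothetical values.
    """

    def __init__(self, move_threshold: float = 10.0, hold_time_s: float = 0.5):
        self.move_threshold = move_threshold
        self.hold_time_s = hold_time_s
        self.state = "idle"
        self.start = (0.0, 0.0)
        self.t_down = 0.0

    def down(self, t: float, x: float, y: float) -> None:
        self.state = "pressed"
        self.start = (x, y)
        self.t_down = t

    def move(self, t: float, x: float, y: float) -> None:
        if self.state == "pressed" and self._distance(x, y) > self.move_threshold:
            self.state = "moving"

    def up(self, t: float, x: float, y: float) -> Optional[str]:
        if self.state == "idle":
            return None  # release without a matching press
        moved = self.state == "moving" or self._distance(x, y) > self.move_threshold
        self.state = "idle"
        if not moved:
            return "hold" if t - self.t_down >= self.hold_time_s else "tap"
        dx, dy = x - self.start[0], y - self.start[1]
        if abs(dx) >= abs(dy):
            return "swipe-right" if dx > 0 else "swipe-left"
        return "swipe-down" if dy > 0 else "swipe-up"

    def _distance(self, x: float, y: float) -> float:
        return math.hypot(x - self.start[0], y - self.start[1])


if __name__ == "__main__":
    fsm = TapSwipeFSM()
    fsm.down(0.0, 100, 100)
    print(fsm.up(0.1, 102, 101))  # tap
    fsm.down(1.0, 100, 100)
    fsm.move(1.1, 130, 105)
    print(fsm.up(1.2, 160, 105))  # swipe-right
```

A multi-touch system would run one such machine per tracked blob ID and combine their outputs; HMMs or neural networks become attractive when gestures are too varied for hand-written rules.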

5. Widget layer: The widget layer generates the visible output for the user; this is where the user actually experiences the touch interface. The most popular multi-touch gestures are scaling, rotating and translating images with two fingers, along with many other innovative and interesting gestures.
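
The two-finger gestures mentioned above reduce to simple geometry on the vector between the two touch points: the ratio of its lengths gives the scale, the change in its angle gives the rotation, and the movement of its midpoint gives the translation. This is a minimal Python sketch of that computation; the function name is hypothetical.

```python
import math

Point = tuple[float, float]


def two_finger_transform(p1_old: Point, p2_old: Point, p1_new: Point, p2_new: Point):
    """Return (scale, rotation_deg, (tx, ty)) implied by two moving fingers.

    With screen y pointing down, a positive angle is a clockwise rotation on screen.
    """
    ox, oy = p2_old[0] - p1_old[0], p2_old[1] - p1_old[1]
    nx, ny = p2_new[0] - p1_new[0], p2_new[1] - p1_new[1]
    old_len = math.hypot(ox, oy)
    if old_len == 0:
        raise ValueError("the two old touch points coincide")
    scale = math.hypot(nx, ny) / old_len
    rotation = math.degrees(math.atan2(ny, nx) - math.atan2(oy, ox))
    rotation = (rotation + 180.0) % 360.0 - 180.0  # normalise to [-180, 180)
    translation = (
        (p1_new[0] + p2_new[0]) / 2 - (p1_old[0] + p2_old[0]) / 2,
        (p1_new[1] + p2_new[1]) / 2 - (p1_old[1] + p2_old[1]) / 2,
    )
    return scale, rotation, translation


if __name__ == "__main__":
    # Fingers spread apart and turn a quarter turn: scale 2, rotation 90 degrees,
    # and the midpoint moves by (-5, 10).
    print(two_finger_transform((0, 0), (10, 0), (0, 0), (0, 20)))
```

Applying the three values to the image's current transform each frame produces the familiar pinch-to-zoom and twist-to-rotate behaviour.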

TUIO Protocol + Touchlib

TUIO Protocol

‘TUI stands for tangible user interface, in which a person interacts with digital information through the physical environment.’ The TUIO protocol is a set of rules that governs communication between tangible tabletop controller interfaces and the underlying application layers. TUIO has since become an industry standard for transmitting tracked blob data. TUIO messages are encoded in the Open Sound Control (OSC) format, which provides an efficient binary encoding for the transmission of arbitrary controller data. OSC is an interoperable, accurate and flexible content format for messaging among computers, sound synthesizers and multimedia devices. TUIO messages can be carried over any channel supported by the OSC implementation; the default transport is UDP, known as TUIO/UDP.

TUIO defines two main types of messages: SET messages and ALIVE messages. A SET message communicates the state of an object, such as its position, orientation and other attributes. An ALIVE message indicates the set of objects currently present on the surface, using a list of unique session IDs. A TUIO client implementation relies on an OSC library such as oscpack or liblo.
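
To show what actually travels over TUIO/UDP, here is a from-scratch Python sketch that encodes one frame of the `/tuio/2Dcur` (2-D cursor) profile as an OSC bundle: an ALIVE message, one SET message per cursor, and the FSEQ frame-sequence message that the TUIO 1.x specification also defines. The encoder follows the OSC 1.0 binary rules (NUL-padded strings, big-endian int32 and float32, size-prefixed bundle elements). It is not the oscpack or liblo API, and sending zero velocity and acceleration is a simplification.

```python
import struct


def osc_string(s: str) -> bytes:
    """OSC string: ASCII bytes, a NUL terminator, then NUL padding to a multiple of 4."""
    data = s.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *args) -> bytes:
    """Encode one OSC message with int32 ('i'), float32 ('f') and string ('s') arguments."""
    tags, payload = ",", b""
    for arg in args:
        if isinstance(arg, str):
            tags, payload = tags + "s", payload + osc_string(arg)
        elif isinstance(arg, int):
            tags, payload = tags + "i", payload + struct.pack(">i", arg)
        elif isinstance(arg, float):
            tags, payload = tags + "f", payload + struct.pack(">f", arg)
        else:
            raise TypeError(f"unsupported OSC argument: {arg!r}")
    return osc_string(address) + osc_string(tags) + payload


def osc_bundle(messages: list[bytes]) -> bytes:
    """Wrap messages in an OSC bundle with the 'immediately' time tag (value 1)."""
    out = osc_string("#bundle") + struct.pack(">Q", 1)
    for msg in messages:
        out += struct.pack(">i", len(msg)) + msg  # each element is size-prefixed
    return out


def tuio_2dcur_frame(cursors: list[tuple[int, float, float]], frame_seq: int) -> bytes:
    """One TUIO 1.x frame for the /tuio/2Dcur profile.

    `cursors` holds (session_id, x, y) with x and y normalised to 0..1. Velocity
    (X, Y) and motion acceleration (m) are sent as 0.0 in this sketch.
    """
    address = "/tuio/2Dcur"
    messages = [osc_message(address, "alive", *[sid for sid, _, _ in cursors])]
    for sid, x, y in cursors:
        messages.append(osc_message(address, "set", sid, float(x), float(y), 0.0, 0.0, 0.0))
    messages.append(osc_message(address, "fseq", frame_seq))
    return osc_bundle(messages)


if __name__ == "__main__":
    packet = tuio_2dcur_frame([(1, 0.50, 0.25), (2, 0.75, 0.60)], frame_seq=42)
    print(len(packet), packet[:8])
    # To deliver it to a TUIO client listening on the conventional UDP port 3333:
    # import socket
    # socket.socket(socket.AF_INET, socket.SOCK_DGRAM).sendto(packet, ("127.0.0.1", 3333))
```

Bundling ALIVE, SET and FSEQ together lets a client apply all changes for a frame at once and drop any cursor whose session ID is missing from the ALIVE list.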

Touchlib

Touchlib is a free, open-source, cross-platform multi-touch framework that provides video processing and blob tracking for multi-touch devices based on FTIR and DI. Video processing in Touchlib is done with Intel’s OpenCV computer-vision library.
